Don’t forget to listen to the most recent episode of my podcast, “What Is X?”, featuring Robin Dembroff on Gender, and what it is.


0.

The recent partial collapse of the Metaverse, when Facebook parent company Meta lost 26% of its market value (or $232,000,000,000) in a single day, as well as the proliferation of new images this triggered of the ongoing transformation of Mark Zuckerberg’s flesh-and-blood body into a dead-eyed simulacrum of the sort only billions of dollars can buy, appearing ever more as if made out of the same materials as Stretch Armstrong (Karo corn syrup, latex), seemed a good occasion to revisit, perhaps more compellingly than on my previous attempts, the so-called “simulation argument”: the idea that what we think is reality is in fact a “computer simulation”.

The other occasion is the publication of philosopher Dave Chalmers’s new book Reality+, whose title sounds like something you might also buy stock in, especially if you are banking on a future of increased technological mediation between human experience and the world, in which our very idea of what is to count as reality will be correspondingly less dependent on the old criterion that served us reasonably well for at least some centuries (even if it has by no means been the default view of human cultures in most places and times): that to be real is to be out there in the external world independently of our experience. I am reading Chalmers’s book right now; the present essay is not, however, a review of it, but only an acknowledgment that he has got me thinking again about some questions that had been sleeping peacefully in me since I finished my most recent book manuscript and sent it off and moved on, or back, to other things.

1.

I suppose I am basically Kantian about the idea of “the world”: I think what we perceive are only phenomena, and that it is experience itself that gives our perception the structure it has, while experience can in no way be said to resemble the world itself or to capture the true nature of reality. In that respect, all experience of reality is experience of “augmented” reality, whether you’re wearing goggles or not, and whatever the state of technology.

This means that in principle I see no reason at all not to take experiences grounded in the encounter of the conscious self with pixels or bits, rather than with midsize physical objects, as real. However, my willingness to go along with this elision does not compel me to accept the inversion some philosophers (as well as their Silicon Valley mécènes) seem to wish to make: that because all reality as given in experience is augmented reality, therefore reality itself, independently of experience, must be explicable by appeal to the same principles and mechanisms that underlie our most recent VR and AR technologies.

According to Chalmers’s construal of the “it-from-bit” hypothesis, to be digital is in itself no grounds for being excluded from reality, and what we think of as physical objects may be both real and digital. One is in fact free to accept the first conjunct and reject the second, even though the two are presented as practically equivalent. I myself am prepared to accept that a couch in VR is a real couch (more precisely, a real digital couch), or at least that it may be real or reified in consequence of the way I relate to it. But this does not compel me to accept that the couch on which I am currently sitting is digital.

2.

There is a persistent conflation of these two points throughout discussions of the so-called “simulation argument”, which Chalmers treats in several of his works but which is most strongly associated with the name of Nick Bostrom, who in 2003 published an influential article entitled “Are You Living in a Computer Simulation?” You can read Bostrom’s argument for yourself and decide whether you find it convincing. Here I just want to point out one significant feature of it that occurs early in the introduction and that the author seems to hope the reader will pass over smoothly without getting hung up on the problems it potentially opens up. Consciousness, Bostrom maintains, might arise among simulated people if, first of all, “the simulations were sufficiently fine-grained”, and, second of all, “a certain quite widely accepted position in the philosophy of mind is correct.”

What is this widely accepted position, you ask? And is its wide acceptance sufficient reason to assume it without argument? It is, namely, the view, which Bostrom calls “substrate-independence”, that “mental states can supervene on any of a broad class of physical substrates. Provided a system implements the right sort of computational structures and processes, it can be associated with conscious experiences.” Arguments for functionalism or computationalism have been given in the literature, Bostrom notes, and “while it is not entirely uncontroversial, we shall here take it as a given.”

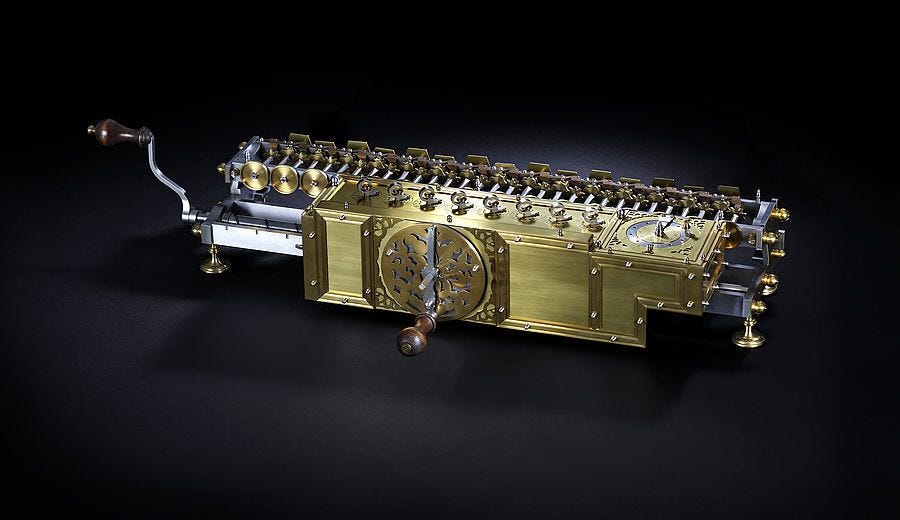

It is of course possible that conscious experiences may be realized in a silicon substrate or in a complex arrangement of string and toilet-paper rolls, just as they may be realized in brains. But do we have any evidence that the arrangements that we have come up with for the machine-processing of information are in principle the kind of arrangements that, as they become more and more complex or fine-grained, cross over into conscious experience? In fact, there is very good reason to think that the appearance of consciousness in some evolved biological systems is the result of a very different sort of developmental history than anything we have seen so far since the dawn of artificial intelligence in the mid-twentieth century (or perhaps with Babbage and Lovelace’s analytical engine in the 1830s, or Leibniz’s reckoning engine in the 1670s, or abacuses in ancient China — the impossibility of finding a terminus a quo for the history of AI is itself a good reason to suspect that what we are looking at is not a short history of artificial minds so much as a long history of artificial prostheses of our own non-artificial minds).

One need not accept that there is anything absolutely unique about the power of biological substrates to facilitate consciousness in order to recognize that the evolutionary processes involved in its gradual emergence over the course of many millions of years do not seem to be the same processes that even our most sophisticated computer simulations are currently recapitulating. For one thing, there is good reason to think that memory is crucial to having anything like what we know as consciousness; nor is this memory anything like RAM or similar technologies, where information is stored and retrievable in a more or less uncorrupted form from its original input. Rather, as Daniel Dennett has compellingly argued (not to endorse a Dennettian philosophy of consciousness wholesale, as indeed he also broadly shares in the functionalist view, while distinguishing himself principally in his deep attention to the unique factors relevant in biologically evolved systems), what we experience as consciousness comes not only from the “multi-track processing” of different sensory inputs, each of which has its own distinctive evolutionary pedigree (some of these inputs are present in other animal species, while some other species have sensory inputs we lack), but also from a subsequent process of “editorial revision” that goes to work upon the initial multi-track recording.
In effect this revision actively gives shape to our memories; what I call “my seventh birthday party”, for example, is not a high-fidelity brain-based recording of an event so much as a story I’ve elaborated over time that bears some historical relation to that event, and that in turn serves to constitute the set of memories that give me my enduring sense of identity, which in turn is an ineliminable part of the package of my conscious experience (here I’m no longer elaborating on Dennett so much as drawing out what I myself take to be one of the implications of the theory of editorial revision).

If there is anything to this theory, then it becomes crucial to appreciate the circumstances in which consciousness emerged: it emerged, namely, very gradually, without forethought, through the integration of several different systems that had emerged antecedently as a result of very specific selective pressures that themselves had nothing to do with shaping consciousness. Is this entire process being simulated when we attempt to simulate the activity of the brain qua information-processing system on a computer? And if it is not, then how may we be said to be simulating the activity of the brain in a way that might even in principle be expected someday to capture and reproduce not only my belief that it is daytime right now, for example, but also the strange experience I have in which, every time I smell French fries, I immediately think of the acne on the face of a boy (Darell) who was in my eighth-grade class? This association is hard to account for, and has a lot to do with the part of my evolutionary history I share with Tyrannosaurus rex, with its giant olfactory bulb and its nonexistent neocortex. Unless and until the human brain is simulated in a way that includes the evolved features that cause me to associate French fries with Darell’s face forty years after I last saw him, I am going to continue to suppose that a belletristic thinker like Marcel Proust is much better positioned than any AI technician to tell us what human consciousness is.

3.

Of course, we know that AI is constantly breaking through barriers. For Norbert Wiener, writing in the early 1960s, machines might indeed learn how to play chess better than human beings, but only because chess is a strategy game that relies primarily on calculation. Machine victory finally came in the 1990s (I saw it happen myself, strangely enough, at an exhibition match between Garry Kasparov and Deep Blue at the top of the World Trade Center in 1997). For some time after that it was supposed that chess is one thing, while a relatively more “intuitive” game like Go could never be dominated by AI. When this assumption proved wrong in turn, it seemed reasonable to infer that there could simply be no stopping the machines, that they would continue to move into ever more intuitive domains seemingly having nothing to do with calculation, even up to the intuition of Darell’s face in the presence of fries. But as Regina Rini has rightly noted, “AlphaGo [the program designed by Google to win this game] is very, very good at Go, but it is not good in the same way that humans are.” AlphaGo is making the moves we would make by intuition, but it is not intuiting; it is still just running through massive amounts of data in the same way machines do when they win at chess, and in a way that human beings never could.

There is no reason to think that as this massive data-processing gets better at doing more intuition-like things, these operations will eventually cross over into conscious experience of the sort I have when I smell French fries. Unlike chess and Go, my experience of French fries and of Darell’s face involves no output; it is not a move in a game; it just is. Nor can I prove to you that it occurs at all. You just have to take my word for it, and that word is not the experience itself, but only another sort of output. In this respect, my experience of French fries does not seem to be the sort of thing a simulation of me could ever be proven to have, and therefore whether it is possible or not seems objectively undecidable.

4.

In the end, philosophical arguments for the possibility of conscious machines —on the correctness of at least one of which the simulation argument depends— are pretty weak. That in itself is not such a harsh criticism to level — it is noble to be wrong. What makes these arguments not just bad but tragically bad is that no one would wish to make them if they were able to gain just a bit of historical perspective on what is going on. To put the point bluntly, we’ve been here before.

Every epoch finds itself tempted to take its shiny new tools, its latest technologies, and to hold them up not just as marvellous inventions, but as the clavis for understanding all of reality. In the seventeenth century significant advances in the horological art quickly translated into bold claims that the universe itself is a great “clockwork”. This is such an intro-level history-of-science fact that presumably even the most presentist technophile knows it, yet somehow it is still easy for some to imagine that twenty-first-century analogizing from artifice to nature holds a greater hope of transcending its historical moment than any comparable exercise of the early modern imagination. But the fact that we always center what we value in our descriptions of reality should give at least a moment of pause to those who think they can escape history. Other venerable traditions have variously described reality as a “book”, as a “chariot”, as a “loom”, as a “temple”, as a “horse” (one likes to suppose that the most lucid representatives of these traditions always understood they were using figurative language in order to get at some profound truth). We center what we value. In the early twenty-first century, we value computers.

The result of the willful bracketing of history, of course, is not liberation from history, but only more naked exposure to the forces shaping the historical present, for example recent popular entertainments such as The Matrix, and recent commercial endeavors such as Zuckerberg’s Meta. To be honest it’s a bit embarrassing to see philosophy operating downstream from popular culture and corporate PR, rather than approaching these overwhelmingly dominant forces critically. But this is what you could easily expect to happen on an approach to philosophy that discourages any consideration of the longue-durée historical formation of the philosophical problems we inherit, and that does not equip its practitioners with any tools for analyzing the ideological structures, those of technocapitalism notable among them, that constrain our philosophical imaginations.

In this respect the simulation argument and other expressions of technophile gnosticism are for the tech world’s postdemocratic ultraliberalism what applied ethics in the vein of Peter Singer is for run-of-the-mill establishment liberalism: an apologia, a distillation of conventional morality that pretends to come up with arguments radically at odds with popular viewpoints within the prevailing social and economic order (e.g., “We should euthanize seventy-five-year-olds”, or “Sexual contact with minors is not prima facie wrong on non-consequentialist grounds”), but at the same time, by taking this order for reality itself, as the neutral field for the picking of philosophical problems, reinforces this order at least as effectively as pious repetition of majoritarian views (“We should not euthanize the elderly”, “Sexualization of minors is abhorrent and does not represent the values of our community here at SUNY - Fredonia”).

If you already understand the context of this latter parenthetical example, I’ll speak up in passing here and say that, obviously, emphatically, Stephen Kershnar should not be fired from his position at that far-flung branch campus. Being a “gadfly” is not the only way to be a philosopher —in fact it’s one of precisely six ways, according to my 2016 book, The Philosopher: A History in Six Types—, and it’s not nearly the most interesting way. I suspect that there’s a good deal of self-delusion involved in any contemporary academic philosopher’s self-styling as a “Socrates figure”. But it’s an approach with a respectable pedigree, and for an undergraduate in Fredonia, exposure to it is surely better than no philosophy at all, which is likely the alternative we are looking at in the era of the intersection of constant manufactured online outrage and equally unabating humanities budget cuts. The problem with the work of someone like Kershnar is not that it exposes students to “harm” (who cares!), but that it purports to be research into basic philosophical problems, which his peers are supposed to take as state-of-the-art inquiry, while what it really is is a demonstration of the severe limits of analytic applied ethics as a framework for the investigation of the human good.

Defenders of the simulation argument are like the applied ethicists to the extent that they take the problems that occupy them as given rather than as historically conditioned artifacts. But they are not gadflies, since in their work they lend rhetorical support to the emerging hegemonic order, and are thus, to invoke another classificatory type from my 2016 book, a variety of courtiers. This does not mean that nothing they say will be of interest. My favorite philosopher, G. W. Leibniz, was also a courtier, and much of his work can be read as an apologia for a variety of imperial projects of the sovereigns under whom he labored or sought to labor (Louis XIV, Peter the Great). Incidentally, Leibniz also believed that the world as we see it is “virtual”, or, as he would prefer to state the view, the world is made up of well-founded phenomena that result from the sum of the perceptual activities of non-bodily substances. In parallel, Leibniz also developed a binary calculus, and recognized that there is a certain significant analogy between our encoding of information in 1’s and 0’s, on the one hand, and on the other God’s creation of the natural world out of being and non-being (this is an analogy that would remain powerful in Ada Lovelace’s writing on the metaphysics of computing in the early nineteenth century). But Leibniz knew this was just an analogy, and never made the mistake of supposing that his shiny new tools not only reflect or channel some aspect of the nature of reality, but indeed themselves tell us what that nature is.

5.

It would be a remarkable coincidence if reality itself shared in the nature of technologies that have only been around for, say, sixty years, and that likely played an important role in the shaping of the imaginations of young Elon, Nick, and Dave when they went to the arcade to play Space Invaders or when they tinkered with their home consoles. Video games shaped my imagination too (sometimes I still dream in the graphics of Donkey Kong), but as I grew up I learned to take a critical distance from them, and also to see them in broad historical continuity with a variety of tekhnēs that the new batch of gnostics would never dream of proposing as the basis of all reality: storytelling, divination, shadow-plays. To do so would be extremely out of step with our era, and certainly would not gain any traction among the leaders of our new technocratic order.

—


If you are interested in the problems discussed in today’s essay, then I strongly recommend that you order my new book, The Internet Is Not What You Think It Is.