In the Shell

This much is all true: I believe that “quality television” is in fact of extremely low quality, that “YA literature” is not literature, that “OA literature” as it were looks more and more like YA with each passing year, that superhero movies are of course not cinema and that no self-respecting adult should ever watch them, except perhaps as an expression of love to some li’l tyke in their lives. If we were living in a culture dominated by grown-ups, Martin Scorsese would be considered the purveyor of middle-brow forgettable fare rather than the gold standard of sophistication, and at least the childless among us would not even have to be aware of Spider-Man’s existence.

Somehow these commitments make me a crypto-reactionary for a whole generation of thirty-somethings with Ph.D.s and with anime avatars on their social-media accounts, even though my principal frame of analysis whenever I discuss these cultural phenomena remains Marxist through and through: they are opium for the masses, churned out by rapacious mega-corporations that do not care about society or about art. Yet in the current climate, for reasons I will never understand, to share 10% of one’s views with the political right is to invite constant entreaties and attempted seductions from the right, while to share 90% of one’s views with the left is to invite categorical ostracism from the left for not being able to get on board for that remaining 10% — even when that remaining 10% concerns “superstructural” questions of art and sensibility, and has nothing to do with fundamental questions of economic justice.

I was brutally reminded of all of this when, last week, the Chronicle of Higher Education picked up and ran a version of my most recent public ‘stack concerning, among other things, the near-total collapse of the academic humanities over the past few decades. Praise for it came almost entirely from the neighbourhood of the “intellectual dark web” and IDW-adjacent personalities, while my bien-pensant progressive peers in academia remained conspicuously quiet (most of them happen to like me, I think, more or less, and prefer to pretend not to notice when my writing in such a “cranky” key appears). Some of this coolness is just self-preservation: we’re locked into academic jobs, and in some sense the most rational individual strategy is just to continue on as if the institutions that pay us were still doing the same thing we thought they were doing when we sought out employment with them.

I have the luxury of working in France, where the crisis the university is facing is somewhat different than in the Anglophone world (and where upper administrators are less likely —I hope!— to search out critical words from a peculiar foreign professor in their system writing in a foreign language). This distance frees up my tongue to say some things about the US system that I probably would be too cautious to say if I were trapped inside it. We are of course not totally free here either from the forces that are warping higher education elsewhere, and I have myself directed MA theses in France —a country second only to Japan in its manga and comics enthusiasm— on such topics as post-humanism in Ghost in the Shell (the original of course, not the Scarlett Johansson vehicle). I’d like to think I have done so supportively and helpfully, even as I remain open about my concerns regarding the loss of a meaningful tradition of humanistic inquiry, where students are left to pick up decontextualised shards of cultural production and to make whatever sense they can from these of our common experience: post-humanism, if you will.

At the end of the day, my concern is that humanities education is subject to the same broad economic forces that are making books, movies, and everything else so infantile and worthless. And yet my progressive peers, when they see one humanities department after another getting axed by the upper administration, can come up with no better response to their students’ work on, say, how Comic Con changed their lives or why the latest iteration of Mad Max is “feminist”, than to declare: “Ah yes, the kids are alright.” (Side note: when The Who deployed this phrase in 1965, it was ironic, intended to convey the idea that the kids are actually kind of messed up.) It is my conviction that the shift in focus of students’ work in the humanities over the past years cannot be considered in isolation from the economic crunch that is bulldozing the institutional structures in which this work is being done. The turn to identity-focused and “relatable” pop-culture indulgence is a consequence of the massive bubbling of tuition and the transformation of universities principally into customer-service operations.

Spidey-studies and other such developments are rightly seen as the death throes of the humanities as we have known them. These developments are of course not the students’ fault, and to mount a criticism of the mismanagement of their education by administrators and faculty is obviously not to target the students unfairly. To pay attention to the canary in the coal-mine is not to express any particular interest in avian pulmonary physiology, but rather in the working conditions of the miners, whose safety the owners are obligated to ensure. To pay attention to the degradation of the humanities as evidenced by the course-work of undergraduates is not to turn into an anti-woke crank, but to remain attuned to the political economy of the university and the existential crisis it faces under pressure from market forces. This is so even if the right happens to enjoy skewering humanities programs for their strange deviations. What the right does is no concern of mine.

I and Thou

My concern is to think about how to ensure the survival of the humanities in the twenty-first century. Part of the threat, of course, is that absolutely everything these days is being optimised, directly or indirectly, for social-media impact. This includes faculty decisions about university curricula, and it includes student protests against faculty decisions. It includes search-engine optimisation of media headlines, and it includes media editors’ tweets about articles about the crisis of the humanities, notably my own.

This was driven home to me once again in the way my previous newsletter was repackaged and propagated by the Chronicle. (My editor there is great; I am not criticising him, but only attempting to describe a widespread phenomenon.) The Chronicle version of the piece had an SEO-influenced title that I did not choose; the Chronicle’s Twitter feed had me defending a position that I do not in fact hold — namely, that it is good to study things like Nahuatl cosmology or Safavid veterinary texts in view of their “intrinsic interestingness”.

This is a defence of the humanities that could not possibly work, and one that I would not make. If people are already skeptical of the worthwhileness of studying something, it will generally do no good to insist to them that it is “intrinsically interesting”. The argument from intrinsic interestingness moreover has an air of trivial hobbyism about it: it makes humanistic inquiry out to be an activity suitable for someone who has no deep commitments or beliefs, and simply needs to be distracted by something or other in order not to grow completely dissolute. If this were all humanistic inquiry were, it would indeed be the special purview of an idle elite, as many already suspect it is.

Here is why I actually think humanistic inquiry should be defended: because it elevates the human spirit. Nothing is interesting or uninteresting in itself in a pre-given way. What is of interest in studying a humanistic object is not only the object, but the character of the relation that emerges between that object and oneself. What emerges from humanistic inquiry is thus best understood as an I-Thou relation, rather than an I-It relation.

Admittedly, in principle such a dyad could be achieved with Marvel comics as much as with Nahuatl inscriptions. But in reality the institutional and cultural context in which pop-culture-focused pseudo-humanities are studied ensures that the student usually remains at the level of I-I identity, which is not a relation at all but pure narcissism, or at best attains a sort of I-Us community, where he or she can bask in the like-mindedness of other comics fans: in other words, academic studies as an institutional buttress for what youth subcultures have always been perfectly able to achieve in a much more anarchic way. (Thank God there was no option for goth studies when I began college in 1990 — in any case I was already an expert.) Humanistic inquiry is not fandom; it is a basic category mistake to suppose that it is. Conversely, the indulgence of a young person’s prior identification as a fan can seldom result in humanistic inquiry, even if the object of her or his fandom is not in principle excluded from the list of humanistic inquiry’s possible objects.

It is this emphasis on the cultivation of an I-Thou relationship to one’s object of study that also saves the present defense of “traditional” humanities from accusations of gratuitous high-browdom or thoughtless cultural conservatism of the sort for which E. D. Hirsch Jr. was rightly ridiculed a generation ago. It is not that there are some artefacts of human cultural creation that are timelessly superior to others, and still less that such artefacts have been more intensively produced in Europe. It is rather that you must latch onto something outside of and alien to the demotic culture that you have inherited and taken for granted in your early life, in order to have the sort of experience of radical difference that catalyses a full and deep second-person relationship to the object of your study.

Esoteric traditions, such as those of priestly classes —including the priestly class of high-mandarin academics—, have historically been very good at preserving bodies of knowledge particularly well-suited to such a relationship. When Richard Wollheim taught me about Freud’s case-study of “the Rat Man” in the seminar I took with him on “Philosophy and Psychoanalysis”, I felt like I was being initiated into something secret and powerful, and totally alien from the vulgar pop-psychology I had gleaned from Phil Donahue and People Magazine in my earlier years. It does not really matter that in the end Freud didn’t have the slightest idea what he was talking about. It was enough just to be made aware that there are ways of accounting for human behaviour and motivation that Donahue could not address, to learn that People had barely scratched the surface of people.

It is this esotericism that often gives indoctrination into a learnèd tradition an air of elitism. But there is no reason, other than lack of will, and other than intellectual and moral cowardice, to suppose that mastering a tradition or a body of knowledge that was not part of your upbringing, or of the popular culture you lived and breathed as an adolescent, is intrinsically a privilege of the elites, while the less fortunate masses can only afford to keep on learning about things they already know, things that are “relatable”. This neologism is a gross misnomer, for in fact no true relation is being established. In order to establish a relation you must recognise the irreducible otherness of the thing with which the relation obtains.

Does it sound fanciful to you to suggest that one might relate to a Mexica calendar or a Leibniz manuscript as to an “other” in the robust second-person sense understood by phenomenological thinkers such as Martin Buber? For review, recall that one of the great lacunae of early modern European philosophy is that it generally left out the second-person experience. Descartes razed the foundations of his knowledge, and then by a process of methodological skepticism was able to reestablish, in succession, his own existence, then the existence of God, then the external world. Early in the 1641 Meditations he had mentioned a “man” in a hat and cloak whom he thought he saw in the street from his window, who was also eliminated in the process of radical doubt, but whose existence, unlike that of God, the world, etc., was never reestablished. Descartes left his fellow man out in the street!

This sort of oversight positioned subsequent modern philosophy for the most part with an overwhelming focus on I-It relations, on how we know the world exists and how we access truths about the world. And it was only much later on, with the rise of phenomenology at the end of the nineteenth century, that philosophers began to appreciate how deeply different the experience of, say, being in a room with another person is from being in that same room with a couch or a pile of sawdust.

This difference was in various ways held to be revelatory of our ontological predicament as human beings. Not just Buber, but also Martin Heidegger, Jean-Paul Sartre, and Maurice Merleau-Ponty made their careers out of analysing this revelation. Sartre made much of the experience of peeping through a keyhole at a pair of lovers and realising he is being peeped at from behind in turn, and similar kinky scenarios. This all looks like the delirious phantasms of a very different cultural moment, but Sartre is getting at something deeply important here: voyeurism is a crime and a transgression; looking into someone’s house to check out their furniture is of an altogether different character, and this difference tells us something about our place in the order of reality.

A Second-Person World

And yet, here’s something that at least some people understood perfectly well long before the phenomenologists became preoccupied with “the other”: there are conditions under which that role of otherness, that second-person Thou status, does not need to be held by a person, or even an animate being at all, whether animal, angel, or God. It is enough, for an object of study to become the other pole of an I-Thou dyad, that one become sufficiently attentive to it, and, in attending to it, that one become aware of the moral dimensions of the emerging relationship.

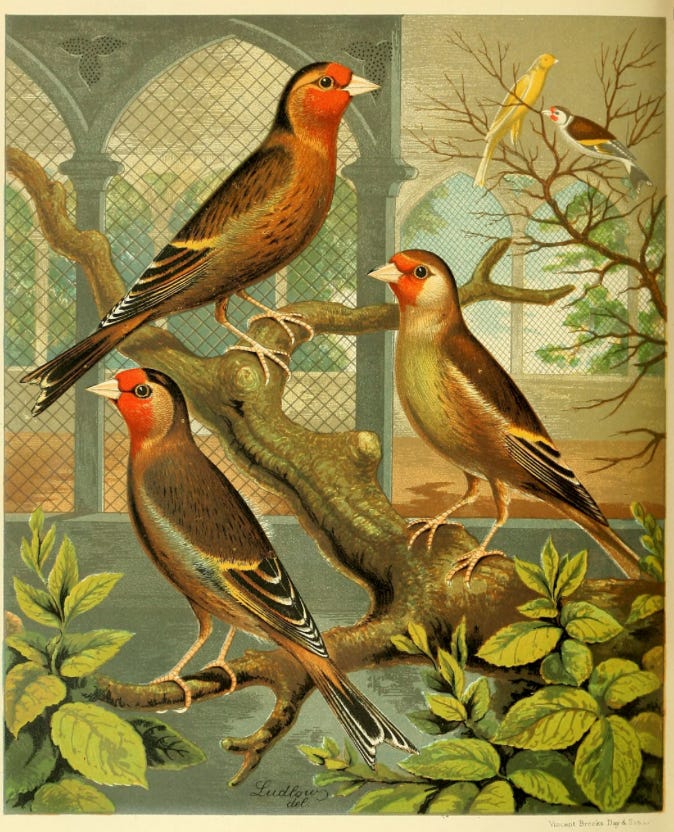

The idea of study of an external object as an occasion for moral self-cultivation is one that was familiar in both the human and natural sciences across much of the early Enlightenment. Lorraine Daston vividly describes, for example, the transformations experienced by eighteenth-century naturalists such as Charles Bonnet in the course of their observations on the life-cycles of aphids. If you stare at an aphid for days on end, your entomology becomes not just a science of insects, but also a practice of meditation, a spiritual exercise, if you will, in which the aphid plays a role that, in another century and another tradition, might also be played by a crucifix.

In the twenty-first century, when attention to nature has been entirely engulfed by the methods and incentives of institutionalised natural science, the idea that nature could be a “moral authority”, and that it could play a role in our moral cultivation, seems particularly foreign — even more foreign than the rapidly fading idea that Homer or Cicero could play a role in the shaping of the moral individual.

In this respect the fate of science might serve for us as an early warning and as a harbinger for what a world without the humanities will look like. There was a time not so long ago when people consciously cultivated their moral subjecthood through the way they attended to and described the natural world; they wrote books with titles like The Theology of Snails to demonstrate the ways in which attention to the symmetries of a gastropod shell can serve as an aid to the cultivation of piety and right conduct. The authors of such works were, by the era’s standards, “scientists” (though that term would only be coined in the 1830s). Today if a scientist takes an interest in a snail this is most likely with an eye to hacking open its genome with CRISPR and making it do new and wondrous things for biomedical technology.

Even after the study of nature fell to the imperative to find concrete solutions to practical problems, even after we stopped paying attention to nature, for some period of time we continued to pay attention to things like poetry. One way of understanding what is happening in the present moment is that poetry is going the same way as snails: the humanities are losing their connection to the project of moral self-cultivation, just as natural science already did over the course of the nineteenth century, roughly speaking after the death of Goethe.

Objects of Care

So it is possible to cultivate an I-Thou relationship with an aphid: Bonnet is reported to have experienced genuine sadness when the ones he studied passed on. If you have spent a whole summer trying to grow cucumbers, and just one little runt appears by the end of the season, you might be familiar with the experience of an I-Thou relationship with a being from the vegetal world, expressed in a hesitation to destroy, by putting it in a salad, the cuke you worked so hard to bring into being. You might at some point even have kicked a friable dirt clod while crossing a field, watched it break into pieces, and immediately regretted that wanton destruction of something which, if not exactly a being by most metaphysical reckonings, at least had some integrity to it that made it, too, a sort of other, and worthy of moral consideration. Such consideration, again, no longer has anything to do with what we think of as “science”, even if it concerns the animal, vegetable, and mineral kingdoms.

The humanities, when they are doing what they are supposed to be doing, are concerned with the awakening of such moral consideration for objects of human creation, past or present, where previously we would not have noticed that this consideration was merited. Traces of human intention transferred into artefacts or texts — these cry out for attention too, and it is the moral calling of the humanist to pay it.

Much has been made by certain German and French thinkers of the etymology and resonances of the word thing. A thing is very different from an object. In its most archaic sense it is a political body, and the word means something that might best be translated as “council”. This notion survives in some Germanic languages, as in the Icelandic term for the national parliament, the Alþingi — literally, the “common thing”. By extension, and gradually, “thing” comes to be applied to material objects or collections of objects that for some reason or other have become salient within human social reality. A thing is whatever is taken up as an object of concern or care.

One way to state my argument concerning the humanities is that humanistic inquiry should not have “objects of study” (although this is how I have myself been speaking until now), but only things. To take an interest in something (aphids, Virgil’s bucolic poetry, Mexica calendars) is to relate to it with the sort of solicitude that no mere object of scientific study in the post-nineteenth-century sense can be said to command. This solicitude does not come from the thing’s pre-given features, any more than our love for another person comes from a survey of that person’s virtuous traits. It comes from a moral disposition that regards the stuff of humanistic inquiry in the same way as we generally acknowledge we should regard actual human others: as worthy of our care not because they are unusually excellent people, but simply because these are the people we have taken up as the focus of our attention.

Pronouns

Among the many reasons I despise the habit of “stating one’s pronouns”, and resent being pressured to do so in professional settings, is that my preferred pronouns are not generally included in the list of options. I am not being facetious when I say this. Think for a moment about how strange it is to specify to other people which third-person pronouns you would like for them to use when they are talking about you, but not to you, as if this were the primary communicative context in which you might be expected to come up.

It is particularly strange, given that there are some cultures (notably, the one I live in) in which there are few things more socially gauche than to refer to a person as he or she, not because to do so is a form of gender essentialism, but because it is generally understood that if you want to talk about a person you should invoke that person by name. Pronouns stand in for nouns, but when it’s a question of proper nouns, and indeed no less than the proper nouns designating beings as particular as individual humans, why settle for the stand-in?

But more importantly, again, why leap right to the imagined scenarios in which we are being talked about, rather than encouraging others to think of us as the sort of being it is most appropriate to talk to? Treat me as a thou, I mean, and I assure you, the he or she or they of me will retreat into the background. It is almost as if this fashionable new emphasis on the third-person pronouns by which we talk about other human beings is announcing, unconsciously, the death of the humanities as a moral mode of engagement with other human selves. How can we sustain an I-Thou relationship with the things we study if we can’t even sustain it with the people who are in the same conference room pretending to be studying alongside us?

Attention

Nor am I being facetious when I say that it may be time to start thinking about alternative institution-building. If universities don’t want to teach and to preserve real humanities, then those of us who do may have to go and do so elsewhere under a different arrangement, one that permits us to pay attention to the things we study in the way that they merit.

This would not be the first time. From roughly 1610 until about 1780, universities were not the centre of the action — newly founded scientific academies and informal salons were. The broad historical shift underway right now echoes the one that was happening then: both periods are characterised by tremendous instability resulting from a revolution in information technologies. The early modern period endured what Ann Blair calls a crisis of “information overload” at least as significant as our own.

We might be able to preserve university-based humanities — I think this is at present an objectively open question. But we are not going to do so if we keep acting like the dog in the meme of the house on fire who smiles and says, “This is fine”. Nor should we be cowed into saying “This is fine” by the truly perverted suggestion that to say anything else plays into the hands of the reactionary press, or is unfair to the students, or is just so much cranky contrarianism.

These suggestions are either false or irrelevant. The historical shift currently underway is massive. The ridiculous stories that the anti-woke hucksters and tabloids love to pick up and ridicule are in fact just the faintest epiphenomena of this massive shift. While the culture-warriors on both sides remain focused on the epiphenomena, the massive shift is subducting humanistic inquiry so far beneath the ground, so fast, that most of us can’t even find the words to account for its disappearance.